Constraint-Aware Importance Estimation for Global Filter Pruning Under Multiple Resource Constraints

Abstract

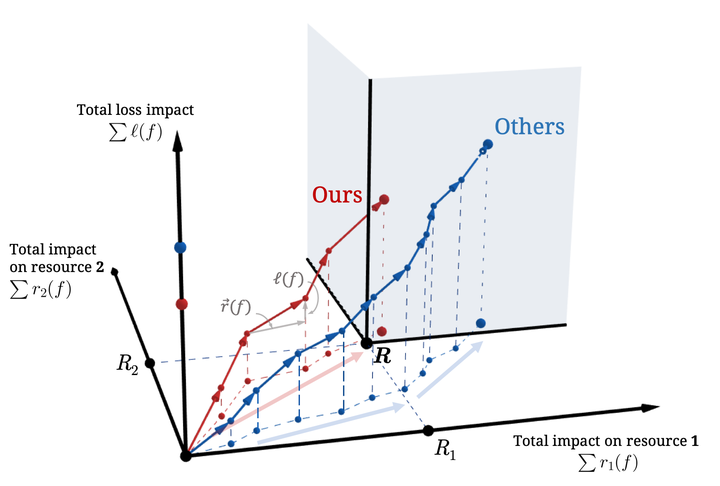

Filter pruning is an efficient way to structurally remove redundant parameters from a convolutional neural network, and at the same time it reduces computation, memory storage, and transfer cost. Recent state-of-the-art methods globally estimate the importance of each filter based on its impact on the loss and iteratively remove the filters with the smallest importance until the pruned network meets some resource constraint, such as the commonly used number (or ratio) of filters left. However, when the constraint is a more practical one, such as the total number of FLOPs, these methods ignore the relation between the constraint and the estimation of filter importance. We propose a novel method called Constraint-Aware Importance Estimation (CAIE) that integrates the impact of pruning each filter on the given resource into the original, purely loss-based importance estimate. Moreover, CAIE generalizes to pruning under multiple resource constraints simultaneously. Extensive experiments show that, under the same multiple resource constraints, a model pruned with CAIE not only meets the constraints accurately but also achieves the best performance among the existing state-of-the-art methods compared.
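To make the idea concrete, the following is a minimal sketch of greedy global pruning with a constraint-aware score. The abstract does not state the CAIE formula, so the scoring rule here (loss importance divided by the normalized reduction of each still-violated resource) is an assumption chosen for illustration, not the authors' definition. The names `Filter`, `constraint_aware_score`, and `prune`, and all numeric values, are hypothetical.

```python
from dataclasses import dataclass
from math import inf


@dataclass
class Filter:
    name: str
    loss_importance: float  # estimated loss increase if this filter is removed
    cost: dict              # resource name -> amount freed by removing the filter


def constraint_aware_score(f, usage, budgets):
    """Assumed rule (not from the paper): loss importance per unit of
    still-needed resource reduction, summed over violated constraints."""
    saving = 0.0
    for resource, budget in budgets.items():
        excess = usage[resource] - budget
        if excess > 0:
            # Cap at 1.0 so overshooting a budget earns no extra credit.
            saving += min(f.cost[resource] / excess, 1.0)
    return f.loss_importance / saving if saving > 0 else inf


def prune(filters, usage, budgets):
    """Remove the lowest-scoring filter until every budget is met."""
    usage = dict(usage)
    remaining = list(filters)
    removed = []
    while remaining and any(usage[r] > budgets[r] for r in budgets):
        best = min(remaining, key=lambda f: constraint_aware_score(f, usage, budgets))
        if constraint_aware_score(best, usage, budgets) == inf:
            break  # no remaining filter helps with any violated constraint
        remaining.remove(best)
        removed.append(best.name)
        for r in budgets:
            usage[r] -= best.cost[r]
    return removed, usage


# Toy input with made-up numbers: only the FLOPs budget is violated.
filters = [
    Filter("a", 1.0, {"flops": 10.0, "params": 1.0}),
    Filter("b", 1.2, {"flops": 100.0, "params": 1.0}),
    Filter("c", 5.0, {"flops": 50.0, "params": 5.0}),
]
removed, final_usage = prune(
    filters,
    usage={"flops": 200.0, "params": 10.0},
    budgets={"flops": 100.0, "params": 10.0},
)
```

In this toy input, a purely loss-based ranking would remove filter `a` first, whereas the constraint-aware score selects `b`, because removing it alone closes the FLOPs gap at only slightly higher estimated loss impact.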